If you're rocking a newer 8-core or better, then forget the hardware encoders and use x264/x265 with the Medium preset or slower. The speed difference isn't likely to be a big factor, and the slower presets allow for improved compression efficiency. For hardware encoders such as NVENC, you want to use the highest-quality settings to get the best efficiency out of them. I typically have the encoder set to Quality (for best efficiency), with Constant Quality between 22 and 26, depending on the video itself; if file size is important, I'll push that a bit higher. I often do my encoding on the laptop rather than on my, admittedly pretty old, desktop.

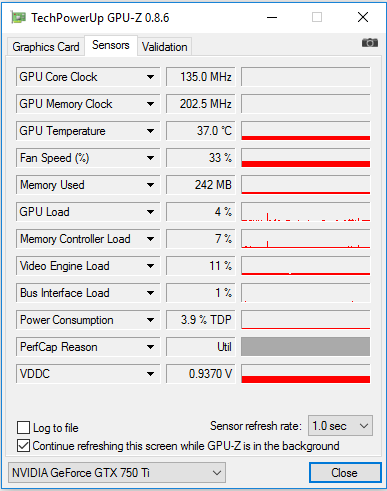

On the GTX 960 (which uses a newer NVENC encoder than the rest of the Maxwell series), I wasn't especially impressed by the encoding efficiency. Tbh, I found the Haswell-generation Quick Sync to be slightly better, so I often stuck with x265 for my non-realtime encoding needs. I can't say anything about the newest Nvidia cards, but I've been pretty impressed with the newer Intel Quick Sync encoders (11th gen Tiger Lake).

Nvidia NVENC (short for Nvidia Encoder) is a feature in Nvidia graphics cards that performs video encoding, offloading this compute-intensive task from the CPU to a dedicated part of the GPU. It was introduced with the Kepler-based GeForce 600 series in March 2012. The encoder is supported in many livestreaming and recording programs, such as vMix, Wirecast, Open Broadcaster Software (OBS) and Bandicam, as well as video editing apps such as Adobe Premiere Pro and DaVinci Resolve. It also works with Share game capture, which is included in Nvidia's GeForce Experience software.

Consumer-targeted GeForce graphics cards officially support no more than 3 simultaneously encoding video streams, regardless of the number of cards installed, but this restriction can be circumvented on Linux and Windows systems by applying an unofficial patch to the drivers. Doing so also unlocks NVIDIA Frame Buffer Capture (NVFBC), a fast desktop capture API that uses the capabilities of the GPU and its driver to accelerate capture. Professional cards support anywhere from 3 to an unrestricted number of simultaneous streams per card, depending on the card model and compression quality.

Later NVENC generations include the fifth (Volta GV10x and Turing TU117), the sixth (Turing TU10x/TU116) and the eighth (Ada Lovelace AD10x). Nvidia chips also feature an onboard decoder, NVDEC (short for Nvidia Decoder), to offload video decoding from the CPU to a dedicated part of the GPU.

You can also try H.264 (x264) on your CPU; it might be just as fast, and it should get you your target bitrate at a good speed.
For GPU encoding, you would choose H.264 (AMD VCE), set the average bitrate to 10,000-12,000 kbps, and keep 2-pass and turbo first pass checked.
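
Average bitrate translates directly into file size, which helps when picking a target. A minimal sketch of that arithmetic, assuming decimal gigabytes and ignoring container overhead (the function name `estimated_size_gb` is hypothetical):

```python
def estimated_size_gb(video_kbps: float, duration_s: float,
                      audio_kbps: float = 0.0) -> float:
    """Estimate output size in decimal GB from average bitrates.

    Ignores container/muxing overhead, so real files will be slightly larger.
    """
    total_kbit = (video_kbps + audio_kbps) * duration_s
    total_bytes = total_kbit * 1000 / 8   # kilobits -> bits -> bytes
    return total_bytes / 1e9              # bytes -> decimal gigabytes


if __name__ == "__main__":
    for kbps in (10_000, 12_000):
        size = estimated_size_gb(kbps, duration_s=3600)
        print(f"{kbps} kbps for 1 hour -> {size:.2f} GB")
    # 10000 kbps for 1 hour -> 4.50 GB
    # 12000 kbps for 1 hour -> 5.40 GB
```

So the 10,000-12,000 kbps range works out to roughly 4.5-5.4 GB of video per hour. Two-pass encoding helps the encoder actually hit that average: the first pass gathers statistics about the video, and the second pass uses them to distribute the bits.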

For H.264, NVENC always supports B-frames, a maximum resolution of 4096×4096, and a maximum bit depth of 8 bits. NVENC has undergone several hardware revisions since its introduction with the first Kepler GPU (GK104). The first generation of NVENC, which is shared by all Kepler-based GPUs, supports H.264 high-profile (YUV420, I/P/B frames, CAVLC/CABAC), H.264 SVC Temporal Encode VCE, and Display Encode Mode (DEM). Nvidia's documentation states a peak encoder throughput of 8× realtime at a resolution of 1920×1080 (where the baseline "1×" equals 30 Hz). Actual throughput varies with the selected preset, user-controlled parameters and settings, and the GPU/memory clock frequencies.

The published 8× rating is achievable with the NVENC high-performance preset, which sacrifices compression efficiency and quality for encoder throughput.
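
To put the 8× figure in concrete terms, here is a small worked example in Python. It converts the rating into frames and pixels per second, then estimates other resolutions under a simplifying assumption that is not from Nvidia's documentation: that throughput scales linearly with pixel count. Since the rating assumes the high-performance preset, quality-oriented presets will likely land below these numbers.

```python
BASELINE_FPS = 30           # Nvidia's "1x" baseline: 30 frames per second
REALTIME_MULTIPLE = 8       # published peak rating at 1920x1080

peak_fps_1080p = REALTIME_MULTIPLE * BASELINE_FPS   # 240 fps
peak_pixel_rate = 1920 * 1080 * peak_fps_1080p      # pixels per second


def estimated_fps(width: int, height: int) -> float:
    """Estimate peak encode fps at another resolution.

    Assumes throughput scales linearly with pixel count, which is only a
    rough approximation.
    """
    return peak_pixel_rate / (width * height)


if __name__ == "__main__":
    print(f"Peak at 1080p: {peak_fps_1080p} fps, {peak_pixel_rate:,} pixels/s")
    for w, h in ((1280, 720), (3840, 2160)):
        fps = estimated_fps(w, h)
        print(f"{w}x{h}: ~{fps:.0f} fps (~{fps / BASELINE_FPS:.0f}x realtime)")
    # Peak at 1080p: 240 fps, 497,664,000 pixels/s
    # 1280x720: ~540 fps (~18x realtime)
    # 3840x2160: ~60 fps (~2x realtime)
```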
